How to Actually Choose an AI Agent Platform

The decision framework, interoperability protocols, open-source alternatives, and industry-specific guidance for pharma, healthcare, and manufacturing.

Previously in Part 1

I broke down the five major platforms competing for enterprise AI agent workloads: Databricks Custom Agents, Salesforce Agentforce, Microsoft Copilot Studio, AWS Bedrock AgentCore, and Google Vertex AI. Each has clear strengths and clear lock-in risks.

If you missed it: Part 1

Part 1 told you what each platform does. This part tells you which one to pick and, more importantly, what matters beyond the platform itself.

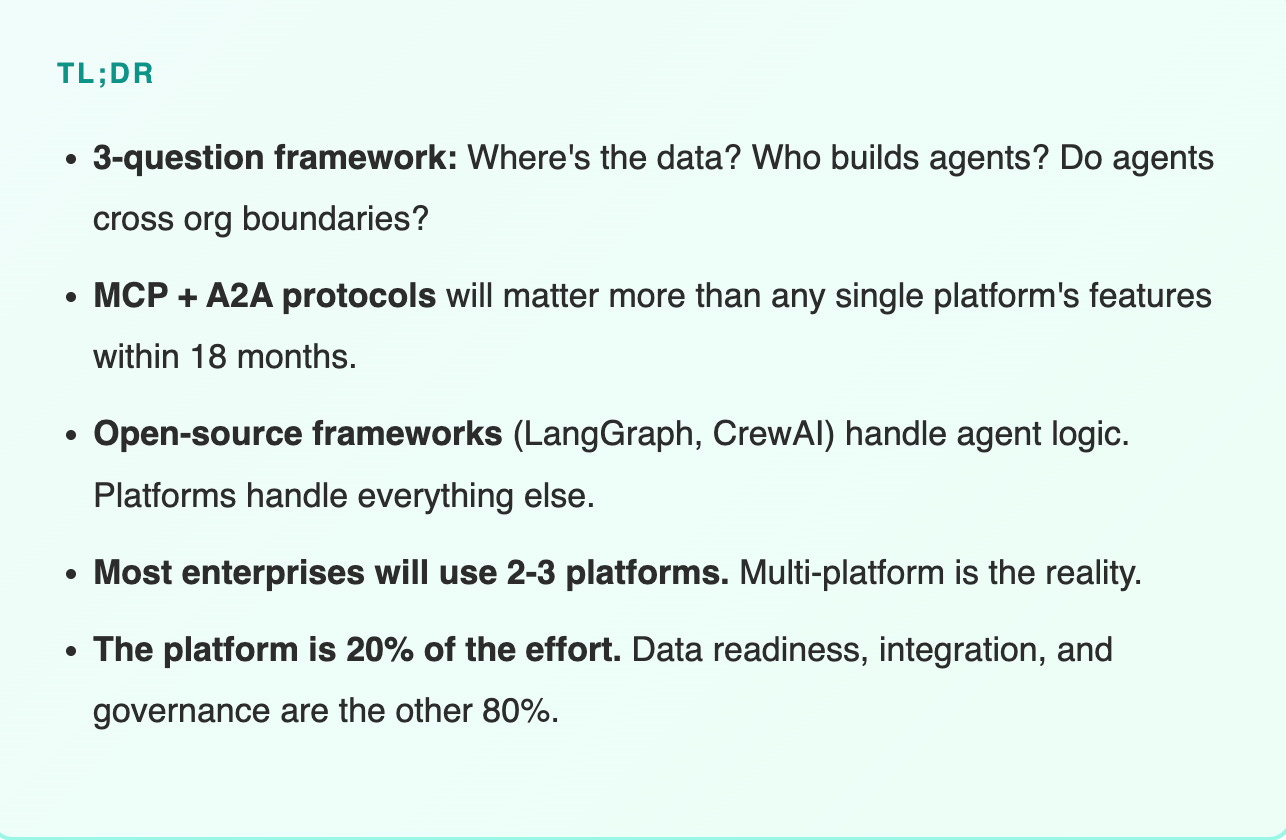

The 3-Question Decision Framework

After deploying AI agents across multiple enterprise environments, I’ve learned that platform selection comes down to three questions, not fifteen.

Feature comparison matrices are comforting. They make you feel like you’re being thorough. But they optimize for the wrong thing. They compare what platforms can do, not what they should do for your specific situation.

Here’s what actually drives the decision:

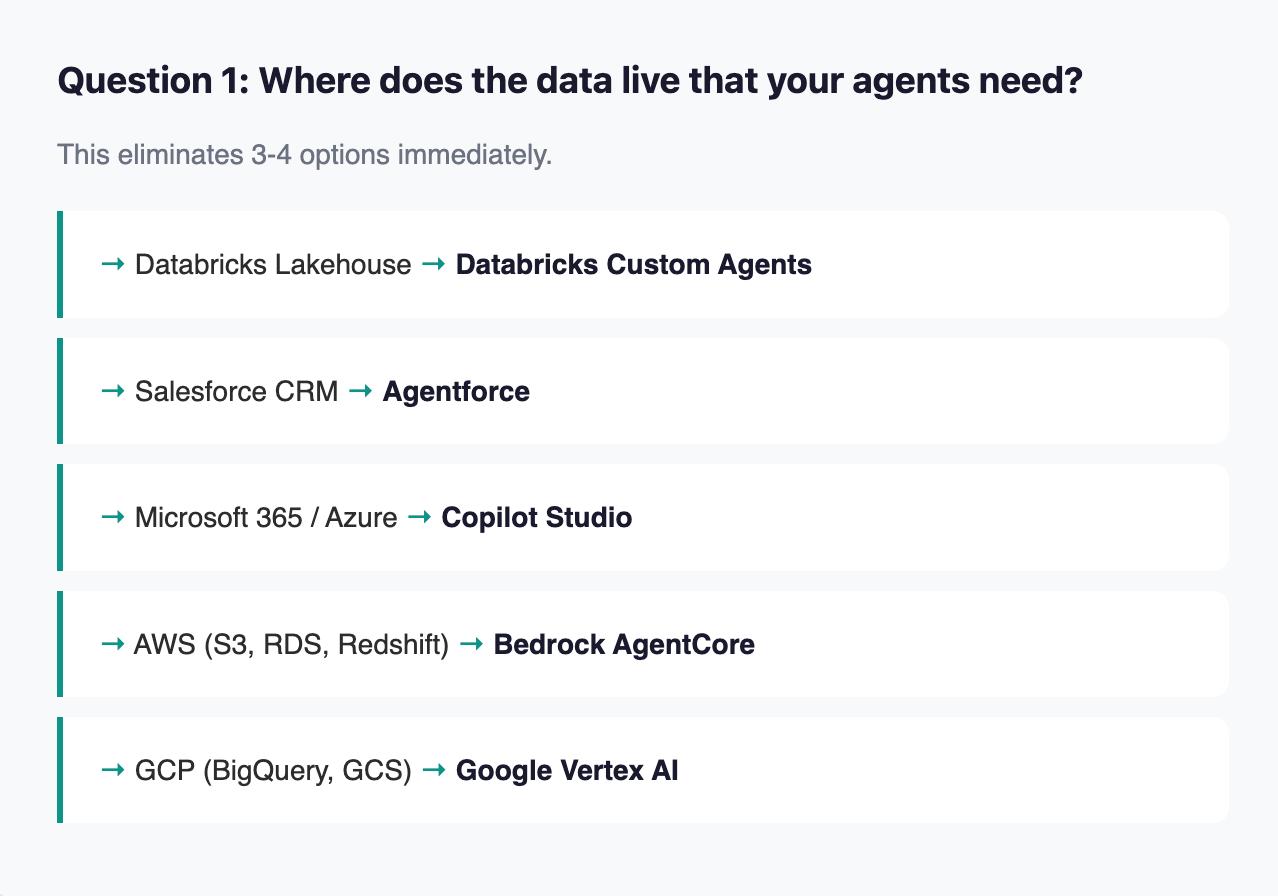

This isn’t laziness; it’s physics. An AI agent’s value rises with its proximity to the data it needs. Every extra hop between agent and data adds latency, integration complexity, and failure points. In production, these add up fast.
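To put rough numbers on “these add up fast,” here is a back-of-the-envelope calculation. It assumes every hop fails independently and shares the same availability; the 99.5% per-hop availability and 40 ms per-hop latency are illustrative figures, not measurements from any platform.

```python
# Back-of-the-envelope: how per-hop availability and latency compound across a
# serial chain of hops between an agent and its data.
# Assumes hops fail independently and share one illustrative availability figure.

def end_to_end_availability(per_hop: float, hops: int) -> float:
    """Probability that a request succeeds through `hops` serial dependencies."""
    if not 0.0 <= per_hop <= 1.0:
        raise ValueError("per_hop must be a probability between 0 and 1")
    return per_hop ** hops

def added_latency_ms(per_hop_ms: float, hops: int) -> float:
    """Latency added by serial hops, ignoring retries, caching, and queuing."""
    return per_hop_ms * hops

if __name__ == "__main__":
    for hops in (1, 3, 5, 8):
        avail = end_to_end_availability(0.995, hops)  # illustrative 99.5% per hop
        extra = added_latency_ms(40, hops)            # illustrative 40 ms per hop
        print(f"{hops} hops: {avail:.2%} success, +{extra:.0f} ms")
```

At 99.5% per hop, five serial hops already drop end-to-end success to roughly 97.5%. Retries and caching change the exact figures in real systems, but the direction holds.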

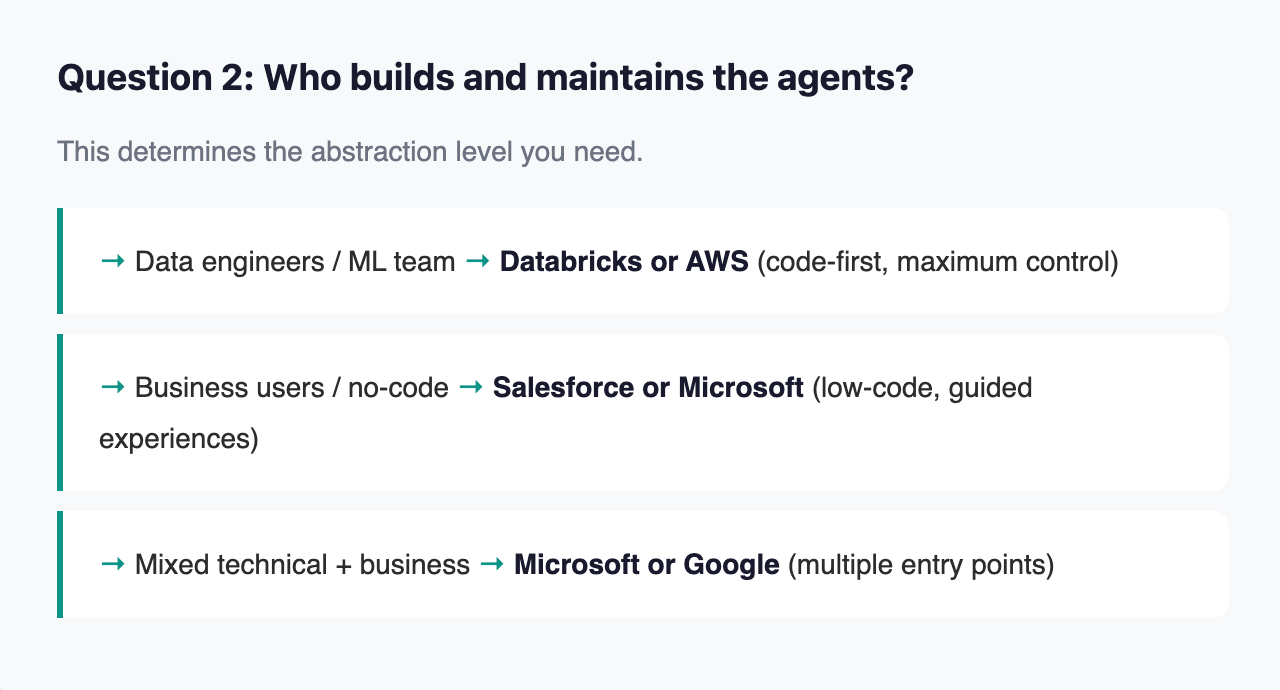

A common mistake: choosing a developer-first platform and then expecting business users to build agents. Or choosing a no-code platform and then being frustrated when your ML team can’t customize the reasoning layer. Match the platform to the people, not the other way around.

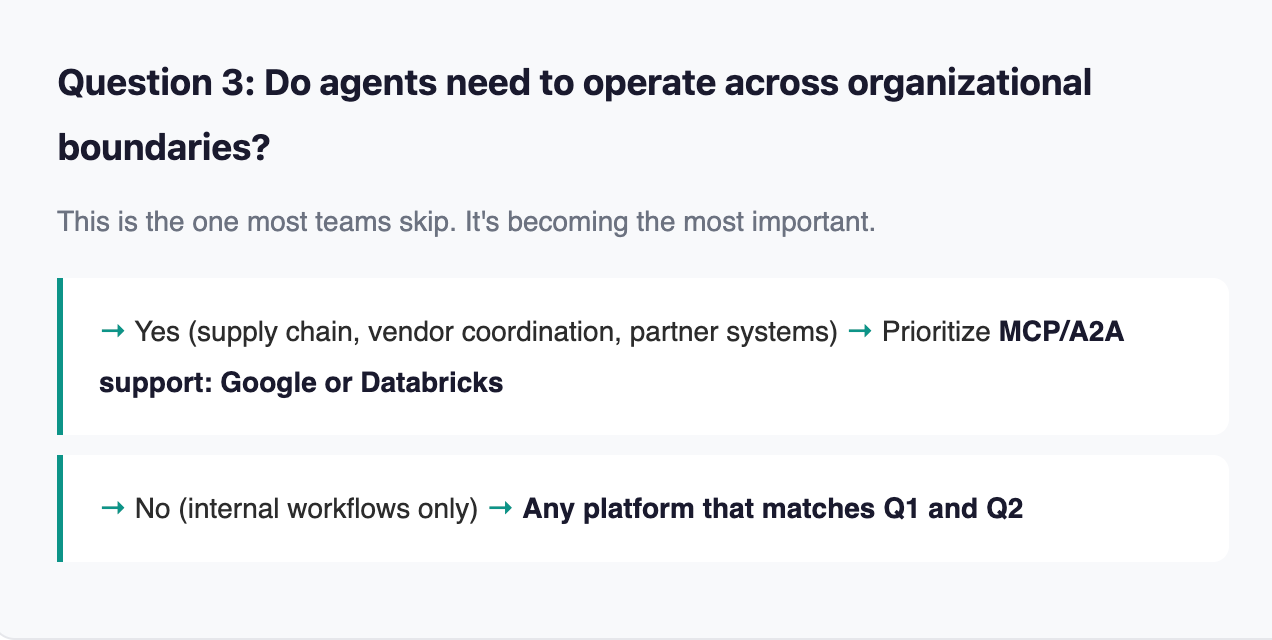

If your answer is “not yet, but probably within 18 months,” treat it as a yes. Protocol support is much easier to build in from the start than to retrofit.
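The three questions (where the data lives, who builds, whether agents cross organizational boundaries) can be encoded as a simple shortlisting rule. Everything in this sketch is hypothetical: the `Candidate` and `Needs` fields, the choice to treat builders and protocol support as hard filters, and the scoring by number of natively reachable data sources are one possible reading of the framework. The platform names are placeholders you would fill in from your own evaluation.

```python
from dataclasses import dataclass

@dataclass
class Candidate:
    """A platform as you assessed it (hypothetical fields, filled in by your own evaluation)."""
    name: str
    native_data_sources: set      # systems the platform reaches without an extra hop
    builder_profile: str          # "business" (low-code) or "developer" (pro-code)
    supports_mcp: bool = False
    supports_a2a: bool = False

@dataclass
class Needs:
    data_sources: set             # Q1: where the agent's data actually lives
    builders: str                 # Q2: who builds and maintains the agent
    cross_boundary: bool          # Q3: yes, or "probably within 18 months"

def shortlist(needs: Needs, candidates: list) -> list:
    """Apply Q2 and Q3 as hard filters, then rank survivors by data proximity (Q1)."""
    ranked = []
    for c in candidates:
        # Q2: match the platform to the people, not the other way around.
        if c.builder_profile != needs.builders:
            continue
        # Q3: assumed here to require at least one interoperability protocol.
        if needs.cross_boundary and not (c.supports_mcp or c.supports_a2a):
            continue
        # Q1: count the needed data sources the platform reaches natively.
        ranked.append((c.name, len(needs.data_sources & c.native_data_sources)))
    return sorted(ranked, key=lambda pair: pair[1], reverse=True)

if __name__ == "__main__":
    needs = Needs({"crm", "lakehouse"}, "developer", cross_boundary=True)
    candidates = [
        Candidate("Platform A", {"crm"}, "business", supports_mcp=True),
        Candidate("Platform B", {"crm", "lakehouse"}, "developer", supports_mcp=True, supports_a2a=True),
        Candidate("Platform C", {"lakehouse"}, "developer"),
    ]
    print(shortlist(needs, candidates))  # [('Platform B', 2)]
```

Treating Q2 and Q3 as filters rather than scores reflects the framework’s emphasis: a platform your builders can’t use, or one that can’t talk across boundaries you’ll need, isn’t rescued by a good data score.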

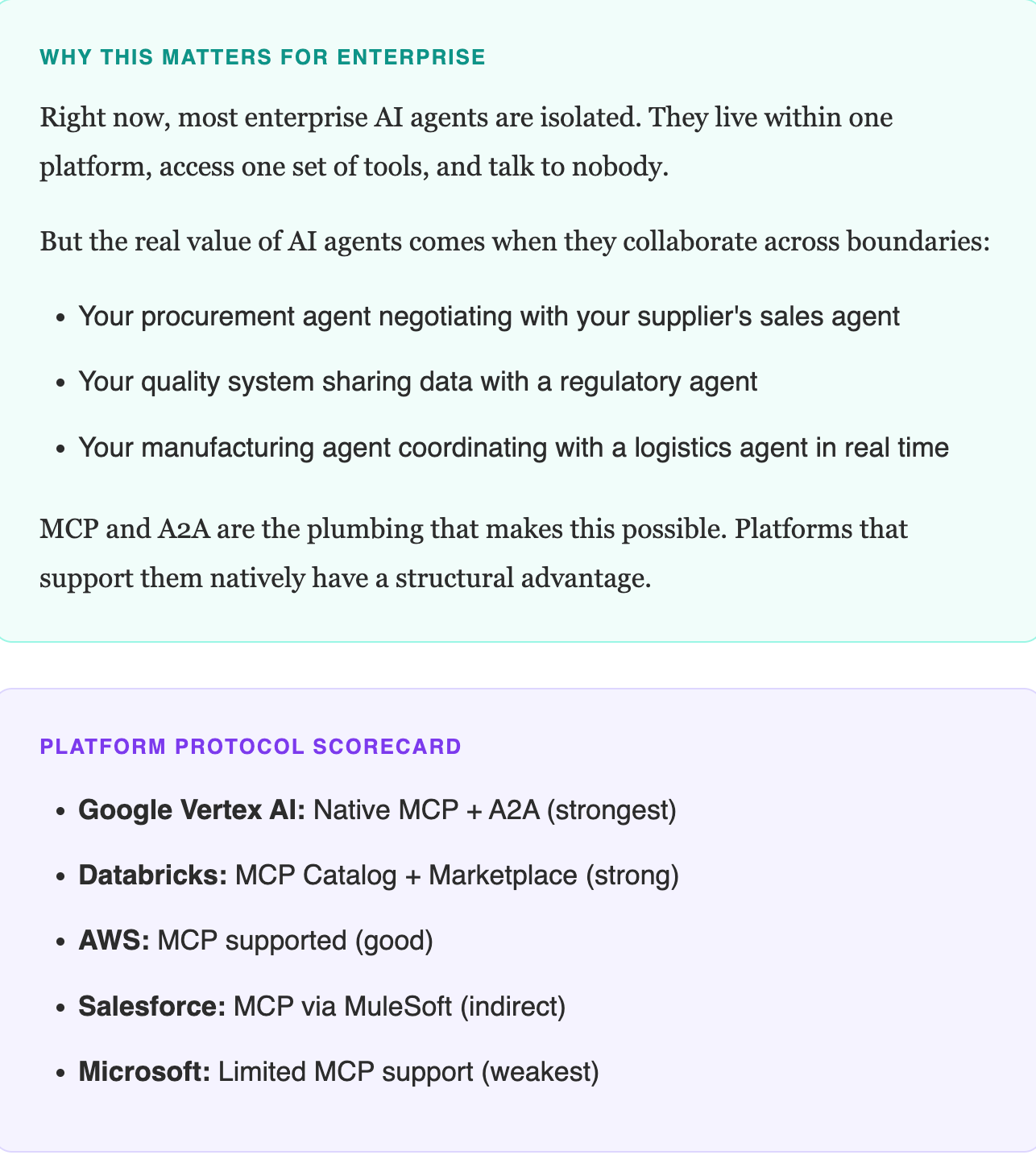

The Interoperability Question: MCP vs. A2A

This is the section most platform comparisons skip. It might be the most important part for the long term.

Two protocols are emerging as standards for AI agent communication:

MCP (Model Context Protocol)

Created by Anthropic. Handles agent-to-tool communication. Think of it as the USB standard for AI agents: a universal way for agents to connect to tools and data sources. It already has 10,000+ active servers and 97 million monthly SDK downloads. This is not theoretical. It’s infrastructure.
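Under the hood, MCP messages are JSON-RPC 2.0. The sketch below shows the shape of the two core tool methods, `tools/list` and `tools/call`, with an in-process dispatcher standing in for a server. It is deliberately not a real MCP server: it skips the initialization handshake, transports, and capability negotiation, and `lookup_batch_status` is a hypothetical tool backed by fake data.

```python
import json

# Hypothetical tool for illustration; a real server would query a real system.
def lookup_batch_status(batch_id: str) -> str:
    fake_db = {"B-1001": "released", "B-1002": "on hold"}
    return fake_db.get(batch_id, "unknown")

# Tool registry: name -> description, JSON Schema for arguments, and handler.
TOOLS = {
    "lookup_batch_status": {
        "description": "Return the release status of a manufacturing batch.",
        "inputSchema": {
            "type": "object",
            "properties": {"batch_id": {"type": "string"}},
            "required": ["batch_id"],
        },
        "handler": lookup_batch_status,
    }
}

def handle(raw: str) -> str:
    """Answer one JSON-RPC 2.0 request using MCP's tools/list and tools/call shapes."""
    req = json.loads(raw)
    method, req_id = req.get("method"), req.get("id")
    if method == "tools/list":
        result = {"tools": [
            {"name": name, "description": t["description"], "inputSchema": t["inputSchema"]}
            for name, t in TOOLS.items()
        ]}
    elif method == "tools/call":
        params = req.get("params", {})
        tool = TOOLS.get(params.get("name"))
        if tool is None:
            # -32602 is JSON-RPC's "Invalid params" code.
            error = {"code": -32602, "message": f"Unknown tool: {params.get('name')}"}
            return json.dumps({"jsonrpc": "2.0", "id": req_id, "error": error})
        text = tool["handler"](**params.get("arguments", {}))
        result = {"content": [{"type": "text", "text": text}], "isError": False}
    else:
        # -32601 is JSON-RPC's "Method not found" code.
        error = {"code": -32601, "message": "Method not found"}
        return json.dumps({"jsonrpc": "2.0", "id": req_id, "error": error})
    return json.dumps({"jsonrpc": "2.0", "id": req_id, "result": result})

if __name__ == "__main__":
    call = {"jsonrpc": "2.0", "id": 2, "method": "tools/call",
            "params": {"name": "lookup_batch_status", "arguments": {"batch_id": "B-1002"}}}
    print(handle(json.dumps(call)))
```

The point of the “USB” analogy shows up here: any MCP-speaking agent can discover the tool through `tools/list` and call it without knowing anything about the system behind it.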

A2A (Agent-to-Agent Protocol)

Created by Google. Handles agent-to-agent collaboration. Agents discover each other, negotiate capabilities, and coordinate tasks across organizational boundaries.
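In A2A, agents describe themselves in a JSON “Agent Card,” and a caller reads those cards to find a partner with the right skill. The sketch below uses a hand-trimmed subset of Agent Card fields and simple in-memory matching; the agents, URLs, and skills are hypothetical, and fetching cards over HTTP and the task exchange itself are left out.

```python
from typing import Optional

# Simplified Agent Cards, loosely modeled on A2A's Agent Card (name, url, skills,
# default input modes). The agents, URLs, and skills here are hypothetical.
AGENT_CARDS = [
    {
        "name": "Safety Signal Agent",
        "url": "https://safety.example.com/a2a",
        "defaultInputModes": ["text/plain", "application/json"],
        "skills": [{"id": "adverse-event-triage", "tags": ["pharmacovigilance", "safety"]}],
    },
    {
        "name": "Submission Prep Agent",
        "url": "https://regulatory.example.com/a2a",
        "defaultInputModes": ["text/plain"],
        "skills": [{"id": "draft-filing", "tags": ["regulatory", "fda"]}],
    },
]

def find_agents(cards: list, tag: str, input_mode: Optional[str] = None) -> list:
    """Discover agents advertising a skill with `tag`; optionally require an input mode."""
    matches = []
    for card in cards:
        # Capability check: skip agents that can't accept what we want to send.
        if input_mode and input_mode not in card.get("defaultInputModes", []):
            continue
        for skill in card.get("skills", []):
            if tag in skill.get("tags", []):
                matches.append((card["name"], card["url"], skill["id"]))
    return matches

if __name__ == "__main__":
    print(find_agents(AGENT_CARDS, "regulatory"))
    print(find_agents(AGENT_CARDS, "safety", input_mode="application/json"))
```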

Both protocols are now hosted by the Linux Foundation, and MCP anchors its Agentic AI Foundation, backed by OpenAI, Anthropic, Google, Microsoft, AWS, and Block. These aren’t proprietary standards anymore. They’re becoming industry infrastructure.

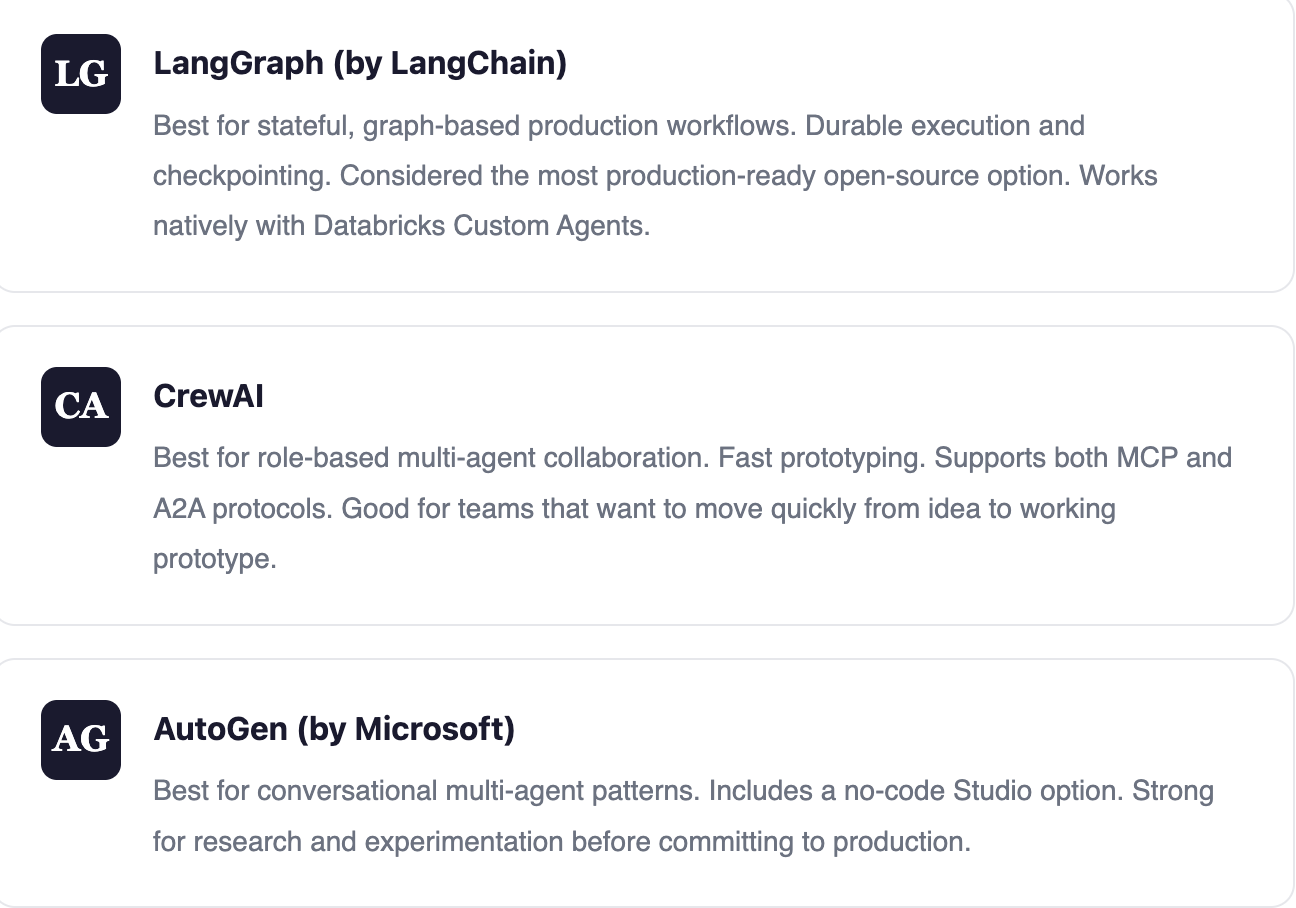

Open-Source Frameworks That Actually Work

Before you commit to a platform, know that some of the most production-ready agent frameworks are open source. They won’t replace a platform, but they handle the agent logic layer and give you portability.

The critical distinction

Open-source frameworks give you agent logic. They don’t give you production infrastructure: deployment, monitoring, security, governance, and memory. That’s what enterprise platforms provide. The pragmatic approach: use open-source frameworks for agent logic, and deploy on an enterprise platform for everything else. Databricks Custom Agents explicitly supports this pattern: build with LangChain locally, then deploy to Databricks Apps without rewriting code.
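What makes that split work is a clean boundary: the agent logic never imports anything platform-specific, so it can move. Here is a minimal sketch of that boundary. `AgentLogic`, `KeywordRouterAgent`, and `HostedAgent` are hypothetical names, not LangChain or Databricks APIs, and the “platform” is reduced to an audit hook plus timing.

```python
import time
from typing import Callable, Protocol

class AgentLogic(Protocol):
    """The part a framework would give you: turn a request into an answer."""
    def run(self, query: str) -> str: ...

class KeywordRouterAgent:
    """Hypothetical stand-in for framework-built agent logic (no platform code inside)."""
    def run(self, query: str) -> str:
        return "route:quality" if "deviation" in query.lower() else "route:general"

class HostedAgent:
    """Hypothetical stand-in for what the platform adds: audit logging and timing."""
    def __init__(self, logic: AgentLogic, audit_sink: Callable[[dict], None]):
        self.logic = logic
        self.audit_sink = audit_sink

    def invoke(self, user: str, query: str) -> str:
        start = time.perf_counter()
        answer = self.logic.run(query)
        # Every call leaves a record, regardless of which logic is plugged in.
        self.audit_sink({
            "user": user,
            "query": query,
            "answer": answer,
            "latency_ms": round((time.perf_counter() - start) * 1000, 3),
        })
        return answer

if __name__ == "__main__":
    log = []
    agent = HostedAgent(KeywordRouterAgent(), log.append)
    print(agent.invoke("analyst-7", "Open a deviation for line 3"))
    print(log[0]["user"], log[0]["answer"])
```

Swapping platforms then means replacing `HostedAgent`, not rewriting `KeywordRouterAgent`.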

What This Means for Pharma, Healthcare, and Manufacturing

Generic platform comparisons miss the constraints that regulated industries face. Let me get specific about the industries I work in.

Pharma

Top priority: Governance and validation

Any AI agent that touches GxP-regulated processes needs audit trails, version control, and validated infrastructure. AWS (GxP compliance tooling) and Databricks (Unity Catalog lineage) are strongest here. Salesforce Agentforce is ideal for commercial/sales ops but doesn’t cover manufacturing or quality.
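One common technique for tamper-evident audit trails is hash chaining: each entry stores the hash of the one before it, so a later edit shows up on verification. The sketch below illustrates the idea for agent actions. It is an illustration under stated assumptions, not validated GxP infrastructure: the entry fields are hypothetical, and a real system would also need access controls, secure storage, and the latest hash kept somewhere independent.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

def _digest(entry: dict) -> str:
    # Canonical JSON so the same entry always hashes the same way.
    payload = json.dumps(entry, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()

def append_entry(trail: list, actor: str, action: str, detail: dict) -> dict:
    """Append an agent action, linking it to the previous entry's hash."""
    body = {
        "seq": len(trail),
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "actor": actor,
        "action": action,
        "detail": detail,
        "prev_hash": trail[-1]["hash"] if trail else GENESIS,
    }
    entry = {**body, "hash": _digest(body)}
    trail.append(entry)
    return entry

def verify(trail: list) -> bool:
    """Recompute every hash. Editing any entry, or removing one before the end,
    breaks the chain; detecting truncation of the tail needs the latest hash stored elsewhere."""
    prev = GENESIS
    for i, entry in enumerate(trail):
        body = {k: v for k, v in entry.items() if k != "hash"}
        if body.get("seq") != i or body.get("prev_hash") != prev or _digest(body) != entry["hash"]:
            return False
        prev = entry["hash"]
    return True

if __name__ == "__main__":
    trail = []
    append_entry(trail, "quality-agent", "flag_deviation", {"batch": "B-1002"})
    append_entry(trail, "qa-reviewer", "approve", {"batch": "B-1002"})
    print(verify(trail))
```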

The emerging use case: Multi-agent systems where a drug safety agent (monitoring adverse events) coordinates with a regulatory submission agent (preparing FDA filings) and a commercial agent (adjusting physician outreach based on safety signals). No single platform handles all three today. That’s why protocol interoperability matters.

Healthcare

Top priority: HIPAA compliance + clinical data integration

Microsoft has the strongest healthcare story (Teams for Health, Nuance/DAX integration, Azure Health Data Services). AWS is a close second. Google’s multimodal capabilities (for example, an agent that processes medical imaging alongside clinical notes) are uniquely valuable for clinical AI.

The cautionary note: We just saw what happens when AI prescribes medications without adequate safeguards (the Doctronic debacle in Utah). Any healthcare AI agent must have robust human-in-the-loop capabilities. Google’s mid-workflow pause and Salesforce’s Atlas hybrid reasoning (LLM + business rules) address this directly.
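A human-in-the-loop gate can be as simple as a deterministic rule, evaluated outside the LLM, that decides which proposed actions must wait for a person. The sketch below is hypothetical: the action types in `REQUIRES_HUMAN`, the 0.9 confidence threshold, and the callback names are placeholders, and it does not describe how Google’s or Salesforce’s pause mechanisms are implemented.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical policy: action types that always need a clinician's sign-off.
REQUIRES_HUMAN = {"prescribe", "change_dose", "cancel_order"}

@dataclass
class ProposedAction:
    kind: str
    patient_id: str
    payload: dict
    model_confidence: float  # 0..1, as reported by the agent

def execute_with_review(
    action: ProposedAction,
    approve: Callable[[ProposedAction], bool],
    perform: Callable[[ProposedAction], str],
    min_confidence: float = 0.9,
) -> str:
    """Run low-risk actions directly; pause for a human on anything else."""
    # Business rules, not the model, decide whether a human must look first.
    needs_review = action.kind in REQUIRES_HUMAN or action.model_confidence < min_confidence
    if needs_review and not approve(action):
        return f"blocked: {action.kind} for {action.patient_id} rejected by reviewer"
    return perform(action)

if __name__ == "__main__":
    reject_all = lambda a: False
    perform = lambda a: f"done: {a.kind}"
    print(execute_with_review(ProposedAction("prescribe", "p-1", {"drug": "x"}, 0.99), reject_all, perform))
    print(execute_with_review(ProposedAction("draft_note", "p-1", {}, 0.95), reject_all, perform))
```

Note that a high model confidence never bypasses the rule: a prescription goes to a reviewer no matter how sure the agent is.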

Manufacturing

Top priority: OT/IT integration + real-time processing

Manufacturing agents need to interact with PLCs, SCADA systems, MES platforms, and IoT sensors. None of the five platforms handles this natively; you’ll always need integration middleware. AWS (IoT Core + AgentCore) and Google (multimodal + BigQuery for sensor data) are closest.
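Much of that middleware is unglamorous normalization: turning PLC tags and sensor payloads into one shape an agent can reason over. The sketch below assumes two hypothetical source formats (a dotted PLC tag with a scaled integer value, and a JSON sensor message); the real connectors to PLCs, SCADA, or MES systems would sit in front of these adapters and are not shown.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

@dataclass(frozen=True)
class Reading:
    """Common shape the agent sees, whatever the source system."""
    asset: str
    signal: str
    value: float
    unit: str
    ts: datetime

def from_plc_tag(tag: str, raw: int, scale: float, unit: str, ts: datetime) -> Reading:
    # Hypothetical PLC convention: "LINE3.FILLER.TEMP", integer counts scaled to units.
    asset, _, signal = tag.rpartition(".")
    return Reading(asset=asset, signal=signal.lower(), value=raw * scale, unit=unit, ts=ts)

def from_iot_json(msg: dict) -> Reading:
    # Hypothetical sensor payload: {"device", "metric", "val", "u", "epoch"}.
    return Reading(
        asset=msg["device"],
        signal=msg["metric"].lower(),
        value=float(msg["val"]),
        unit=msg["u"],
        ts=datetime.fromtimestamp(msg["epoch"], tz=timezone.utc),
    )

if __name__ == "__main__":
    now = datetime.now(timezone.utc)
    print(from_plc_tag("LINE3.FILLER.TEMP", 725, 0.1, "degC", now))
    print(from_iot_json({"device": "LINE3.FILLER", "metric": "Vibration",
                         "val": "4.2", "u": "mm/s", "epoch": 1_700_000_000}))
```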

The Siemens + NVIDIA angle: Their partnership to build AI-driven manufacturing sites using digital twins is creating a parallel ecosystem. Manufacturing AI agents may ultimately run on industrial platforms (Siemens Xcelerator, Rockwell Plex) rather than cloud-native agent frameworks. PepsiCo is already seeing 20% throughput gains with this approach. Watch this space closely.

Most organizations won’t use just one platform.

A pharma company might use Agentforce for commercial operations, Databricks for manufacturing analytics agents, and AWS for GxP-validated quality agents. A healthcare system might run Copilot Studio for administrative workflows, AWS for clinical AI, and Google for medical imaging agents.

Multi-platform is the reality. Which is why interoperability protocols (MCP, A2A) will matter more than any single platform’s feature list within 18 months.

And here’s the truth that no vendor will tell you:

The platform is 20% of the effort. The other 80% is data readiness, integration architecture, governance design, and change management. Get the 80% right, and almost any platform will work. Get it wrong, and the best platform in the world won’t save you.

We’ve seen this pattern at Customertimes across every industry we serve. The teams that succeed don’t start with “which platform should we use?” They start with “what does our agent need to do, and is our data ready for it?”

That question sounds simple. Answering it honestly is the hardest part of any AI agent project.

The Checklist: Before You Choose a Platform

Run through these before you make a decision:

Map your data landscape. Where does the data your agents need actually live? This determines 60% of the platform decision.

Define who builds and maintains. Technical team? Business users? Both? Match the platform’s abstraction level to your people.

Assess cross-boundary needs. Will agents need to communicate with systems outside your organization? If yes (or “probably within 18 months”), prioritize MCP/A2A support.

Check regulatory requirements. GxP? HIPAA? OT security? Not all platforms have equal compliance tooling. This is non-negotiable in regulated industries.

Plan for multi-platform. Don’t try to force everything into one platform. Identify which platform serves which use case, and design the interoperability layer from Day 1.

Invest in the 80%. Before you spend a dollar on platform licensing, make sure your data is clean, your integrations are mapped, and your governance framework exists.