The 5 Platforms Fighting for Enterprise AI Agents

Databricks, Salesforce, Microsoft, AWS, and Google all launched enterprise agent frameworks in rapid succession. Here’s what each one actually does, from someone who deploys them in regulated environments.

Every major cloud and enterprise platform now has an AI agent framework. They all claim to be “production‑ready.” Having deployed AI agents across pharma, healthcare, and manufacturing, I can tell you most of them aren’t there yet for regulated settings.

The Agent Arms Race Is Real

Something shifted in early 2026.

For two years, “AI agents” were mostly a buzzword.

Then, over the span of a few months, everything dropped at once:

Databricks launched Custom Agents and the broader Agent Bricks stack.

Salesforce announced Agentforce 360 with autonomous multi‑step workflows.

Microsoft rolled out multi‑agent orchestration and enhanced governance in Copilot Studio and Azure AI Foundry.

AWS expanded Amazon Bedrock into AgentCore with a more modular architecture.

Google enhanced Vertex AI Agent Builder and its Agent Builder SDK with MCP and emerging agent‑to‑agent protocols.

Every major platform now offers a production agent framework. The messaging is nearly identical: “Build, deploy, and govern AI agents at scale.”

But the implementations are very different. And for enterprise teams trying to ship AI agents in regulated industries, where “move fast and break things” gets you a consent decree, those differences matter enormously.

Let me break down each platform, not from a feature‑list perspective, but from the perspective of someone who has to make these things actually work.

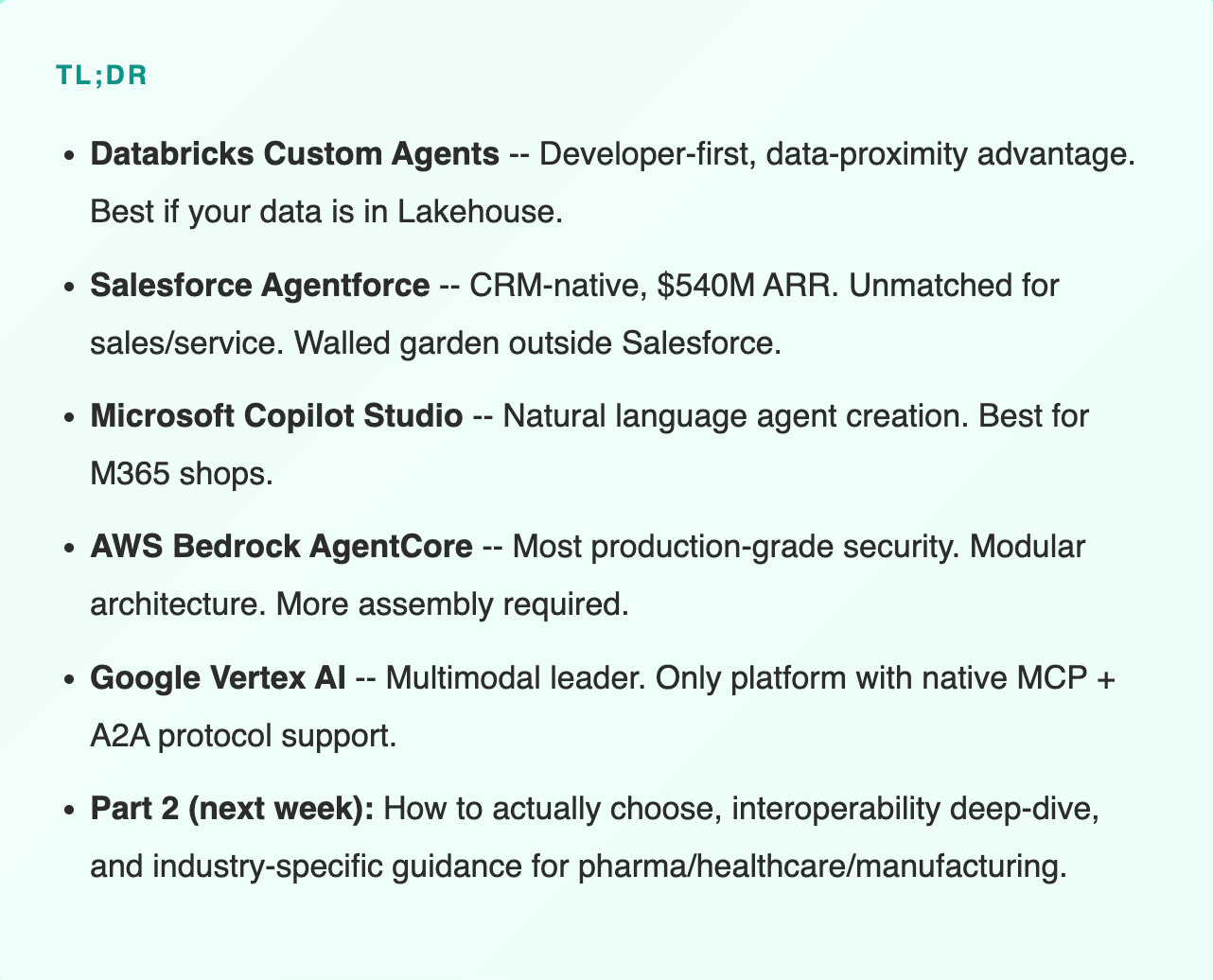

Databricks Custom Agents

The data‑first agent platform

Databricks Custom Agents let developers build, test, and deploy AI agents as fully managed Databricks Apps. It’s the centerpiece of the broader Agent Bricks suite.

Key capabilities

Framework‑agnostic: Build with LangChain, CrewAI, or raw Python, and deploy via CI/CD without rewriting code. Most platforms force you into proprietary tooling; Databricks doesn’t.

Lakehouse‑native memory: Agent state and conversation history persist across sessions directly in the Lakehouse, reducing the need for a separate memory database layer.

MCP catalog and marketplace: Early Model Context Protocol (MCP) integration so agents can discover and use tools from a curated catalog and marketplace.

Agent Bricks (no‑code): Natural language agent creation with templates for common enterprise tasks. This is the business‑user layer.

Unity Catalog governance: Every agent, tool, and data access point governed through the same catalog that manages the rest of your data estate.

My take from the field

The strongest play is the data story. If your data already lives in the Databricks Lakehouse, Custom Agents give you one of the shortest paths from data to agent. No heavy ETL, no large‑scale duplication. The agent sits directly on top of the data it needs.

In pharma and manufacturing, this matters. When a quality management agent needs to access batch records, deviation reports, and supplier data in real time, the fewer hops between data and agent, the fewer things break.

The framework‑agnostic approach also means your ML team uses what they already know. That alone can cut time‑to‑production by weeks.

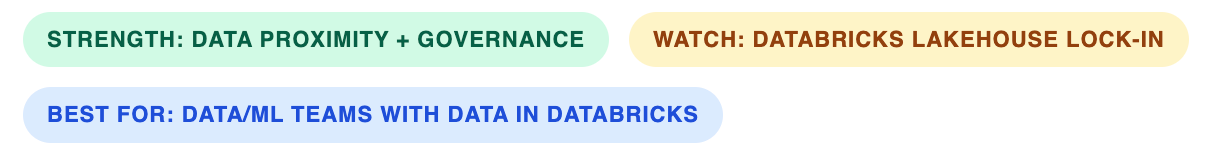

Salesforce Agentforce

The CRM‑native agent ecosystem

Agentforce is no longer just a feature. Salesforce has been rebuilding its architecture around agents. It is already the commercial leader in CRM‑native agents, with Agentforce contributing meaningful, fast‑growing ARR.

Key capabilities

Atlas Reasoning Engine: Hybrid reasoning that balances LLM creativity with structured business rules. The agent doesn’t just generate text; it follows your business logic.

Agent Script: JSON‑based scripting for conditionals, hand‑offs, and guardrails, making implicit process logic explicit.

Data Cloud grounding: Agents operate on structured, unified CRM data. They know your customers, pipeline, and cases, not just what’s in the latest prompt.

Agentforce Voice: Real‑time voice agents integrated with Amazon Connect, Five9, and Genesys.

Flexible pricing: Usage‑based and per‑user models, typically combining per‑conversation charges, per‑action “Flex Credits,” and an optional per‑user/month tier for heavier or unlimited internal usage. (Exact numbers change frequently; check the current Agentforce pricing page or recent SaaS analyses rather than relying on static figures.)
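To make the hybrid‑reasoning idea concrete, here is a minimal sketch in plain Python of "the model proposes, explicit rules decide." It is not Agent Script syntax and does not call any Salesforce API; `ProposedAction`, `RULES`, and `decide` are hypothetical names I made up for illustration, and the two rules are invented examples of the kind of process logic a pharma commercial team might make explicit.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple

@dataclass
class ProposedAction:
    """What a model wants to do next (hypothetical shape, for illustration only)."""
    kind: str
    recipient_opted_out: bool
    mentions_off_label_use: bool

# Each rule is (reason shown to a reviewer, predicate that is True when violated).
Rule = Tuple[str, Callable[[ProposedAction], bool]]

RULES: List[Rule] = [
    ("recipient has opted out", lambda a: a.recipient_opted_out),
    ("off-label content needs review", lambda a: a.mentions_off_label_use),
]

def decide(action: ProposedAction) -> Tuple[str, List[str]]:
    """Run every rule; hand off to a human if any rule is violated."""
    violations = [reason for reason, violated in RULES if violated(action)]
    if violations:
        return "handoff_to_human", violations
    return "execute", []

ok = decide(ProposedAction("send_email", False, False))
blocked = decide(ProposedAction("send_email", True, True))
```

The point of the pattern is that the guardrail outcome is deterministic and auditable: the same proposed action always produces the same decision and the same list of reasons, regardless of what the model generated.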

My take from the field

For sales, service, and marketing, Agentforce is the most mature option right now. The CRM data grounding gives agents context that other platforms can’t match without massive integration work.

For pharma commercial teams, it’s compelling. An agent that pulls a physician’s prescribing history, checks compliance restrictions, drafts personalized outreach, and schedules follow‑up, all within Salesforce, is a real, shippable workflow.

The limitation is real, though. The moment your agent needs to do something outside Salesforce (query a manufacturing execution system, pull data from a LIMS, check OT network status), you’re in integration territory (MuleSoft or equivalent). That’s additional cost, complexity, and risk.

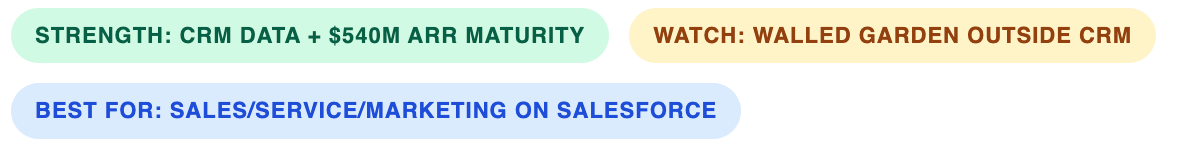

Microsoft Copilot Studio + Azure AI

The productivity suite agent layer

Microsoft’s approach is two‑pronged: Copilot Studio for low‑code agent building, and Azure AI Foundry for developers. The Microsoft 365 integration is the moat.

Key capabilities

Multi‑agent orchestration: Agents can call other agents as tools. A “project manager” agent delegates to a “data analyst” agent and a “report writer” agent, coordinating a multi‑step workflow.

Centralized governance: Entra ID‑based identities, policies, and monitoring for agents across Microsoft 365 and Copilot Studio.

Natural language creation: Describe what you want; the platform scaffolds an agent and workflow, with no coding required for common patterns.

Model flexibility: Support for Claude, a wide range of other models through Azure AI Foundry, and bring‑your‑own‑model options.
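The "agents as tools" pattern from the list above is easy to sketch without any platform at all. The following is a toy illustration in plain Python, not Copilot Studio or Azure AI Foundry code: `Orchestrator`, `data_analyst`, and `report_writer` are hypothetical names, and the sub‑agents return canned strings where a real system would call a model.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# An "agent" here is just a named callable: task text in, result text out.
AgentFn = Callable[[str], str]

def data_analyst(task: str) -> str:
    # Stand-in for a model call; returns a canned finding.
    return f"analysis of '{task}': 3 deviations found"

def report_writer(task: str) -> str:
    # Stand-in for a model call; wraps its input as a report.
    return f"REPORT: {task}"

@dataclass
class Orchestrator:
    """A 'project manager' agent that delegates to other agents as tools."""
    tools: Dict[str, AgentFn]
    trace: List[str] = field(default_factory=list)  # audit trail of delegations

    def call(self, agent_name: str, task: str) -> str:
        if agent_name not in self.tools:
            raise KeyError(f"unknown agent: {agent_name}")
        self.trace.append(agent_name)
        return self.tools[agent_name](task)

    def run(self, goal: str) -> str:
        # Fixed two-step plan: analyze first, then write up the findings.
        findings = self.call("data_analyst", goal)
        return self.call("report_writer", findings)

pm = Orchestrator(tools={"data_analyst": data_analyst, "report_writer": report_writer})
result = pm.run("Q3 batch records")
```

In a real deployment the plan would come from a model rather than being hard‑coded, which is exactly why the delegation trace matters: in a regulated setting you need to show which agent did what, in which order.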

My take from the field

If your organization lives in Microsoft 365, and most enterprises do, Copilot Studio is often the path of least resistance. The agent is already where your people work: Teams, Outlook, SharePoint, Excel.

For healthcare organizations on Microsoft infrastructure, an agent that pulls patient scheduling from Dynamics, checks formulary info in SharePoint, and drafts a summary in Word, all without leaving the ecosystem, cuts integration complexity significantly.

The challenge is cross‑application autonomy. The moment your agent needs to act outside the Microsoft stack (and in manufacturing or pharma ops, it almost always will), you’re back to custom integrations.

AWS Bedrock AgentCore

The infrastructure‑grade agent runtime

AWS has expanded Amazon Bedrock into AgentCore, a modular service architecture designed for production at scale. This is the infrastructure‑first play.

Key capabilities

Modular services: Distinct components for runtime (serverless deployment), gateway (unified tool and model access), memory (context retention), identity (auth), policy (Cedar‑based access control), and observability (OpenTelemetry monitoring).

Pay‑per‑use economics: No per‑seat licensing; you pay for actual consumption: models, calls, and underlying infrastructure.

Cedar policies: You describe access rules in human‑readable terms, and the system compiles them into formal Cedar policies, the same policy language already used by other AWS security services such as Amazon Verified Permissions.

Broad model selection: Multiple foundation models through Bedrock, plus integrations with partner and open‑weight models.
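Why does formalized access control matter so much for auditors? A small sketch helps. The code below is plain Python, not Cedar syntax and not an AgentCore API. It only mimics two properties of Cedar's evaluation model: nothing is allowed unless a permit matches, and any matching forbid overrides every permit. The `Policy` class, the `"*"` wildcard, and the agent and resource names are all assumptions for illustration.

```python
from dataclasses import dataclass
from typing import List

@dataclass(frozen=True)
class Policy:
    effect: str     # "permit" or "forbid"
    principal: str  # agent identity, or "*" for any
    action: str     # e.g. "read", or "*" for any
    resource: str   # e.g. "batch_records", or "*" for any

    def matches(self, principal: str, action: str, resource: str) -> bool:
        # A field matches if it is the wildcard or equals the requested value.
        pairs = ((self.principal, principal), (self.action, action), (self.resource, resource))
        return all(pattern in ("*", value) for pattern, value in pairs)

def is_authorized(policies: List[Policy], principal: str, action: str, resource: str) -> bool:
    matched = [p for p in policies if p.matches(principal, action, resource)]
    if any(p.effect == "forbid" for p in matched):
        return False  # forbid always wins
    return any(p.effect == "permit" for p in matched)  # default deny

POLICIES = [
    Policy("permit", "qa_agent", "*", "batch_records"),
    Policy("permit", "qa_agent", "*", "deviation_reports"),
    Policy("forbid", "*", "write", "batch_records"),  # batch records are read-only for agents
]

can_read = is_authorized(POLICIES, "qa_agent", "read", "batch_records")
can_write = is_authorized(POLICIES, "qa_agent", "write", "batch_records")
```

Because the decision is a pure function of the policy set and the request, you can replay any historical request against any historical policy version and get the same answer. That is the property regulated teams usually mean by "explainable" access control.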

My take from the field

AWS is the most “production‑grade primitives” option. If you need enterprise security, monitoring, and compliance at scale, AgentCore gives you granular control over how agents run, what they can touch, and how they’re audited.

For pharma companies that already run validated workloads on AWS, keeping agents inside the same security and compliance perimeter is a big advantage. Cedar is particularly interesting for regulated industries that need explainable, formalized access control.

The trade‑off: this is the most “build it yourself” option. You get powerful building blocks, but assembling them into a working agent system takes more engineering than Salesforce or Microsoft.

Google Vertex AI Agent Builder + SDK

The multimodal + interoperability leader

Google centers its agent platform on Vertex AI Agent Builder and its associated SDK. Two things stand out above everything else: multimodal capabilities and a strong bet on interoperability.

Key capabilities

Native MCP and A2A‑oriented design: Among the big clouds, Google is the most aggressive on interoperability, with first‑class Model Context Protocol (MCP) support and early patterns for agent‑to‑agent communication.

Multimodal agents: Gemini 3 Pro‑class models process text, audio, video, and images natively, with very large context windows. Agents can “see” and “hear,” not just read and write.

Agent Engine for memory: Short‑term and long‑term memory with topic‑based organization so agents remember what actually matters across sessions.

Human‑in‑the‑loop: Agents can pause mid‑workflow, request human input, and then resume with full state preserved.
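The pause‑and‑resume behavior is worth sketching, because "full state preserved" is the part that is easy to get wrong. The example below is a toy in plain Python with no Vertex AI calls: the step names, the `run_workflow` function, and the JSON round trip standing in for a durable store are all assumptions for illustration.

```python
import copy
import json
from typing import Optional

# Hypothetical three-step workflow with one human checkpoint in the middle.
STEPS = ["detect_defect", "await_human_approval", "trigger_alert"]

def run_workflow(state: dict, human_input: Optional[str] = None) -> dict:
    """Advance until the workflow finishes, is rejected, or needs a human."""
    state = copy.deepcopy(state)  # never mutate the caller's saved state
    while state["step"] < len(STEPS):
        name = STEPS[state["step"]]
        if name == "await_human_approval":
            if human_input is None:
                state["status"] = "paused"  # stop here; caller persists state
                return state
            state["log"].append(f"human said: {human_input}")
            if human_input != "approve":
                state["status"] = "rejected"
                return state
            human_input = None  # the input is consumed by this checkpoint
        else:
            state["log"].append(f"ran {name}")
        state["step"] += 1
    state["status"] = "done"
    return state

initial = {"step": 0, "status": "running", "log": []}
paused = run_workflow(initial)
saved = json.dumps(paused)  # stand-in for writing to a durable store
resumed = run_workflow(json.loads(saved), human_input="approve")
```

Keeping the state as plain serializable data, rather than a live in‑memory object, is the design choice that lets the approval arrive hours later, on a different worker, with the log of earlier steps intact.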

My take from the field

Google’s interoperability story is the most forward‑leaning. When your pharma manufacturing agent needs to communicate with your supply‑chain vendor’s agent, protocol standards matter. Google is betting on being the Switzerland of agent interoperability.

The multimodal capabilities are uniquely valuable in manufacturing. An agent that processes real‑time video from a production line, detects visual defects, cross‑references quality specs, and triggers an alert requires native multimodal processing. No other platform does this as cleanly right now.

The downside is the usual one with Google Cloud: if you’re not already on GCP, the adoption friction is real.

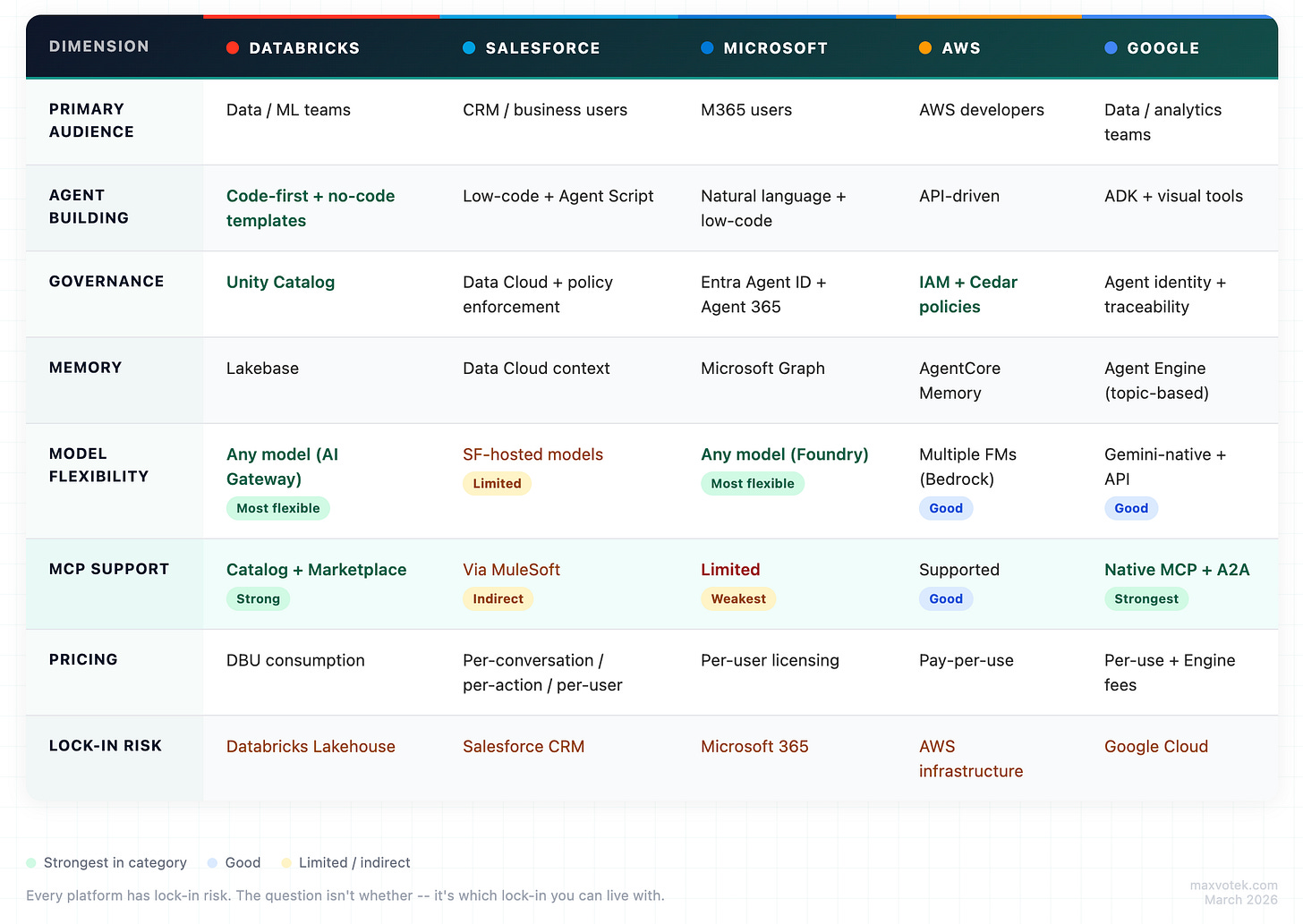

The Comparison at a Glance

The Pattern You Can’t Ignore

Every single platform has lock‑in risk. That isn’t a bug; it’s the business model.

The real question isn’t whether you’ll be locked in, but which lock‑in you can live with given where your data and workflows already reside.

More on that in Part 2.

What I’m Not Telling You Yet

This comparison tells you what each platform does. It doesn’t tell you which one to pick, because that depends on factors the feature lists don’t cover:

Where does your data actually live (Delta on Databricks, Salesforce Data Cloud, S3, BigQuery, on‑prem)?

Who builds and maintains the agents in your org (central ML team, line‑of‑business admins, external SI)?

Do your agents need to talk to agents outside your company (CDMOs, logistics providers, payers, partners)?

What does “production” mean in your regulatory context (GxP validation, HIPAA, FDA scrutiny, internal audit)?

Those are the questions that matter. And that’s exactly what Part 2 covers.